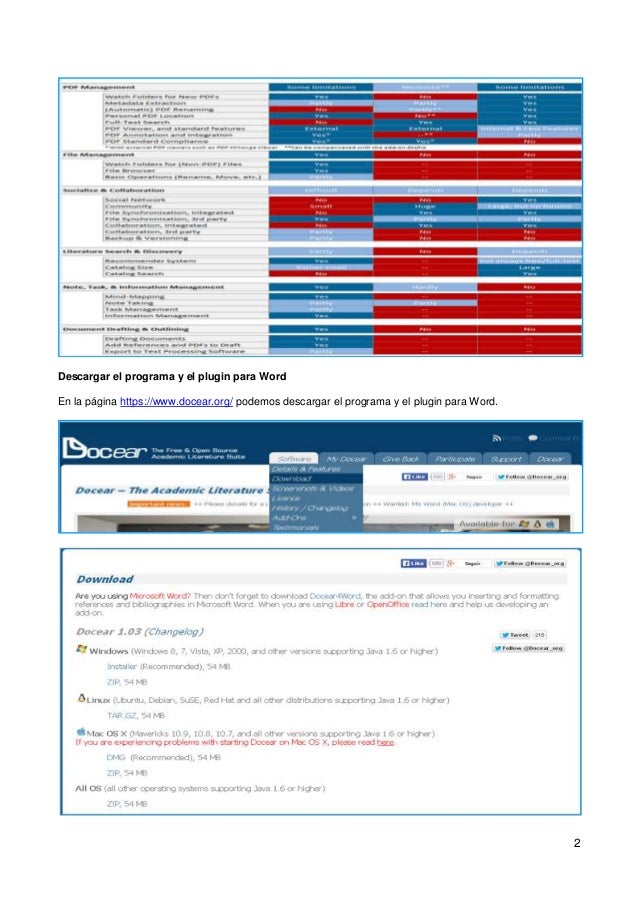

In a previous paper we provided guidelines for scholars on optimizing research articles for academic search engines such as Google Scholar. Feedback in the academic community to these guidelines was diverse. Some were concerned that researchers could use our guidelines to manipulate rankings of scientific articles and promote what we call 'academic search engine spam'. To find out whether these concerns are justified, we conducted several tests on Google Scholar. The results show that academic search engine spam is indeed possible, and with little effort: we increased rankings of academic articles on Google Scholar by manipulating their citation counts; Google Scholar indexed invisible text we added to some articles, making papers appear for keyword searches the articles were not relevant for; Google Scholar indexed some nonsensical articles we randomly created with the paper generator SciGen; and Google Scholar linked to manipulated versions of research papers that contained a Viagra advertisement. At the end of this paper, we discuss whether academic search engine spam could become a serious threat to Web-based academic search engines.

Numerous recommendation approaches are in use today. However, comparing their effectiveness is a challenging task because evaluation results are rarely reproducible. In this article, we examine the challenge of reproducibility in recommender-system research. We conduct experiments using Plista's news recommender system and Docear's research-paper recommender system. The experiments show that there are large discrepancies in the effectiveness of identical recommendation approaches in only slightly different scenarios, as well as large discrepancies for slightly different approaches in identical scenarios. For example, in one news-recommendation scenario, the performance of a content-based filtering approach was twice as high as that of the second-best approach, while in another scenario the same content-based filtering approach was the worst-performing approach. We found several determinants that may contribute to the large discrepancies observed in recommendation effectiveness. Determinants we examined include user characteristics (gender and age), datasets, weighting schemes, the time at which recommendations were shown, and user-model size. Some of the determinants have interdependencies. For instance, the optimal size of an algorithm's user model depended on users' age. Since minor variations in approaches and scenarios can lead to significant changes in a recommendation approach's performance, ensuring reproducibility of experimental results is difficult. We discuss these findings and conclude that to ensure reproducibility, the recommender-system community needs to (1) survey other research fields and learn from them, (2) find a common understanding of reproducibility, (3) identify and understand the determinants that affect reproducibility, (4) conduct more comprehensive experiments, (5) modernize publication practices, (6) foster the development and use of recommendation frameworks, and (7) establish best-practice guidelines for recommender-systems research.

The evaluation of recommender systems is key to the successful application of recommender systems in practice. However, recommender-systems evaluation has received too little attention in the recommender-system community, in particular in the community of research-paper recommender systems. In this paper, we examine and discuss the appropriateness of different evaluation methods, i.e. offline evaluations, online evaluations, and user studies, in the context of research-paper recommender systems. We implemented different content-based filtering approaches in the research-paper recommender system of Docear. The approaches differed in the features they used (terms or citations), in user-model size, in whether stop-words were removed, and in several other factors. The evaluations show that results from offline evaluations sometimes contradict results from online evaluations and user studies. We discuss potential reasons for the non-predictive power of offline evaluations, and discuss whether results of offline evaluations might have some inherent value. In the latter case, results of offline evaluations would be worth publishing, even if they contradict results of user studies and online evaluations.
